Sensor sizes range from ¼" to the new 1-1/8" of the 22 or 29 megapixel sensors. The pixel (picture element) is the active site used to accumulate photons. When even greater resolution is required for an application, line scan sensors are employed. Phoenix Imaging has implemented line scan sensors up to 16K in size, producing extremely high resolution images. The more pixels a sensor has, the greater the resolving power of the image; put another way, the more pixels per image area, the higher the resolution. A simple way to calculate the resolution of a sensor is to divide the sensor size by the number of active pixels. The illustration below shows the relative size of the various sensors available and their physical dimensions. It should be noted that the sensor size is actually smaller than its name implies, i.e. a 1/2" sensor is not 1/2" in any dimension.
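The "sensor size divided by active pixels" rule can be sketched in a few lines. This is a minimal illustration only; the function name and the 6.4 mm / 1280 pixel figures are hypothetical values chosen for the example, not data for any particular sensor.

```python
def pixel_pitch_um(sensor_size_mm: float, active_pixels: int) -> float:
    """Return sensor size divided by active pixel count, in micrometers per pixel."""
    if active_pixels <= 0:
        raise ValueError("active_pixels must be positive")
    # Convert millimeters to micrometers before dividing.
    return sensor_size_mm * 1000.0 / active_pixels


# Hypothetical sensor: 6.4 mm wide active area with 1280 active pixels across.
pitch = pixel_pitch_um(6.4, 1280)
print(f"Approximate pixel pitch: {pitch:.2f} um")
```

The same function applies to either axis of an area sensor or to the single row of a line scan sensor, as long as the size and pixel count refer to the same dimension.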

Sensors (CCD / CMOS) are often referred to by an imperial fraction designation such as 1/1.8" or 2/3". This measurement originates back in the 1950s and the time of Vidicon tubes. Those who find the specification sheets for these sensors are then even more confused about the relationship between the fraction and the actual diagonal size of the sensor.

Vidicon tube sizes were typically 1/2", 2/3", etc. The size designation does not define the diagonal of the sensor area but rather the outer diameter of the long glass envelope of the tube. Engineers soon discovered that, for various reasons, the usable area of the imaging plane was approximately two thirds of the designated size.
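As a rough illustration of the two-thirds rule, the sketch below estimates the usable diagonal from the fractional designation. The function name is hypothetical, and the result is only an approximation; the actual diagonal of a given sensor should be taken from its specification sheet.

```python
MM_PER_INCH = 25.4


def approx_usable_diagonal_mm(designation_inches: float) -> float:
    """Estimate the usable diagonal as about two thirds of the designated size."""
    return designation_inches * MM_PER_INCH * 2.0 / 3.0


# Designations mentioned in the text, expressed as decimal inches.
for label, inches in [('1/2"', 1 / 2), ('2/3"', 2 / 3), ('1/1.8"', 1 / 1.8)]:
    print(f"{label}: roughly {approx_usable_diagonal_mm(inches):.1f} mm diagonal")
```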

The sensors are typically manufactured as CCD (charge-coupled device) or as CMOS (complementary metal oxide semiconductor). The CCD has been around longer than the CMOS and offers a few advantages over the CMOS technology. For example, CCD sensors are more sensitive than CMOS sensors, resulting in better images, especially in low light conditions.

The CCD sensors are cleaner, or less grainy, than CMOS sensors because they are less susceptible to electronic noise. CMOS sensors are less expensive to manufacture because they are based on the same technology used to manufacture most electronic devices, i.e. computer chips. Since the CMOS technology is similar to that of most electronic devices, manufacturers can integrate electronic circuitry on the CMOS sensor element to improve the signal.

This integration of electronics in the sensor area (pixel area) requires real estate that would otherwise have been used for gathering photons, thus reducing the "active pixel size". Most consumer cameras today use CMOS technology, and great improvements are being implemented to produce images that are close to the quality of CCD sensors.

The old Vidicon tubes were circular in shape, and the image used by the tube was taken from the center of the tube. Most sensors today are rectangular rather than square. Early CCD and CMOS sensors had a 4:3 aspect ratio, where the horizontal axis was 4 units long and the vertical axis was 3 units high. Today you can find sensors in a number of shapes, from square (1:1) to widescreen (16:9). The optical system (lens) produces an image of the object at its focal plane, and the longest dimension of the sensor (its diagonal) must fit within the image circle that the lens produces.
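The relationship between diagonal, aspect ratio and image circle can be sketched as follows. This is an illustrative calculation only; the function names, the 8 mm diagonal and the 9 mm image circle are assumptions made for the example.

```python
import math


def sensor_dimensions_mm(diagonal_mm: float, aspect_w: float, aspect_h: float):
    """Return (width, height) of a rectangular sensor from its diagonal and aspect ratio."""
    scale = diagonal_mm / math.hypot(aspect_w, aspect_h)
    return aspect_w * scale, aspect_h * scale


def fits_image_circle(width_mm: float, height_mm: float, circle_diameter_mm: float) -> bool:
    """A rectangle fits inside a circle when its diagonal does not exceed the diameter."""
    return math.hypot(width_mm, height_mm) <= circle_diameter_mm


# Hypothetical 4:3 sensor with an 8 mm diagonal and a lens with a 9 mm image circle.
width, height = sensor_dimensions_mm(8.0, 4, 3)
print(f"4:3 sensor: {width:.2f} mm x {height:.2f} mm")
print("Fits 9 mm image circle:", fits_image_circle(width, height, 9.0))
```

For the same diagonal, a 16:9 sensor is wider and shorter than a 4:3 sensor, so the diagonal rather than the width or height alone is the dimension to compare against the image circle.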

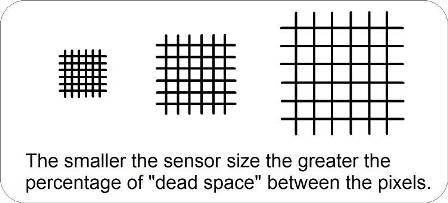

Assuming that you can get the object to fit onto your sensor correctly, you also have to consider the dead space on the sensor. This is important if you do not want objects of interest to literally "fall in the cracks" of the sensor. All sensors have space that separates the pixels. This space appears as lines on the sensor and produces a grid pattern of pixels. The line width between the pixels is very similar for all sensor types and must be considered when selecting a sensor size for your application. Because the line width is about the same, dead space takes up a greater percentage of the sensor area on smaller sensors than on larger sensors. If the defective condition that we are attempting to isolate is small, it has a greater chance of "falling into the crack" of a small sensor than of a large sensor. This is often observed when a sensor or a component under test is moved slightly and the defect "disappears". If the component or sensor is moved again by a small displacement, the defect "reappears" in the image area. This problem exists for both large and small sensors but is more prevalent in the smaller sensors.
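The effect of a roughly constant line width can be illustrated with a simple geometric model. The sketch below assumes square pixels on a regular grid with a uniform gap between them, and it assumes that a smaller sensor with the same pixel count has a smaller pixel pitch; the 1 µm gap and the pitch values are hypothetical, not measured data.

```python
def dead_space_fraction(pixel_pitch_um: float, gap_um: float) -> float:
    """Fraction of each pixel cell lost to the gap, for square pixels on a square grid."""
    if not 0 <= gap_um < pixel_pitch_um:
        raise ValueError("gap must be non-negative and smaller than the pixel pitch")
    active_area = (pixel_pitch_um - gap_um) ** 2
    cell_area = pixel_pitch_um ** 2
    return 1.0 - active_area / cell_area


# Same hypothetical 1 um gap, different pixel pitches.
for pitch in (3.0, 5.0, 10.0):
    print(f"pitch {pitch:4.1f} um: {100 * dead_space_fraction(pitch, 1.0):.0f}% dead space")
```

Under these assumptions, the dead space fraction rises quickly as the pitch shrinks, which is consistent with small defects being more likely to fall between pixels on a smaller sensor.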

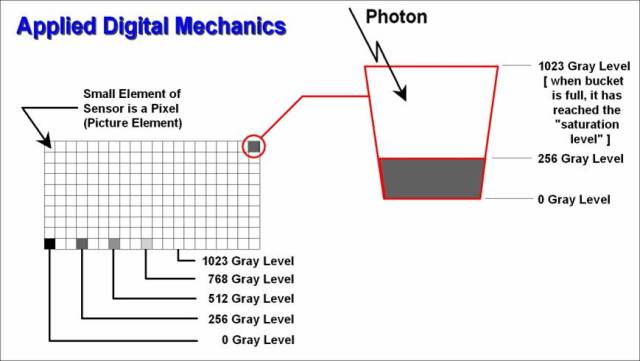

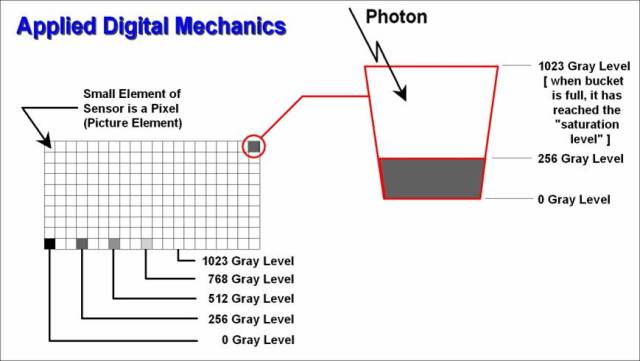

You can think of each pixel as a photon collector or a photon bucket. The more photons a pixel collects, the greater the charge on the element and the brighter the pixel appears in the image. The sensor is designed with a maximum grayscale sensitivity; typically, sensors have a minimum grayscale resolution of 8 bits (0-255 gray levels). Better sensors often provide 10, 12 or 14 bits. A 10-bit system offers 0-1023 gray levels for image depth, which means that a 10-bit image has 4 times as many steps as an 8-bit image. This is important because the background noise of a sensor with a 50 dB signal-to-noise ratio is about 5 to 10 gray levels. That means every time you acquire an image using this sensor, the gray level of an individual pixel can change by 5 to 10 gray levels, or approximately 2% to 4% of the total range of an 8-bit sensor. If you use a vision algorithm with a fixed threshold and the gray level of a defective condition is near your threshold, it may be isolated as a defect in one image acquisition and then as acceptable in the next. If you use a sensor with 10 bits of grayscale resolution (depth), then the same 5 to 10 gray level variation represents only about 0.5% to 1% of the full-scale range.

The disadvantage of using a sensor with greater grayscale resolution is that the image now requires 16 bits of data per pixel rather than 8 bits. This leads to greater processing time for an equivalent sensor area (horizontal x vertical pixels). However, it is often a more efficient use of the image processing cycle to start with a better quality image than to perform more operations to "clean up" a noisy image. The illustration to the right shows the relative contrast of the various levels of grayscale: a gray level of 0 equals black and a gray level of 1023 equals white (saturated pixel). It should be noted that the typical human eye can differentiate only about 40 to 45 gray levels when shown multiple gray level targets (targets within a range appear to be the same gray level).
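The arithmetic behind these percentages can be checked with a short sketch. The function names are illustrative, and the 5 gray level noise figure is simply the lower end of the range quoted in the text.

```python
def gray_levels(bits: int) -> int:
    """Number of distinct gray levels for a given bit depth."""
    return 2 ** bits


def noise_percent_of_full_scale(noise_levels: float, bits: int) -> float:
    """Express a noise amplitude in gray levels as a percentage of the full range."""
    return 100.0 * noise_levels / gray_levels(bits)


# The same 5 gray level variation, viewed at different bit depths.
for bits in (8, 10, 12):
    pct = noise_percent_of_full_scale(5, bits)
    print(f"{bits:2d}-bit: {gray_levels(bits):5d} levels, 5 levels = {pct:.2f}% of range")
```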

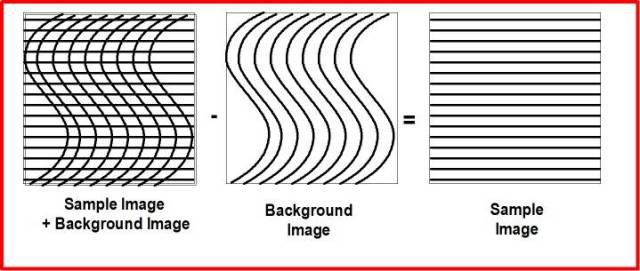

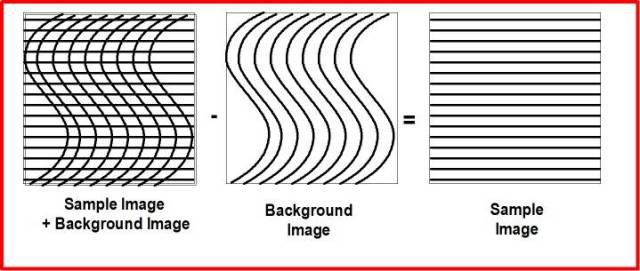

Another important factor in a system's ability to perform surface inspection is "Background Normalization". This function cannot be performed without the aid of image processing, i.e. the human inspector does not have the capacity to perform this operation without the use of a computer or image processing system. The simplest form of this function is the subtraction of a background image from an image that contains the same background information plus some additional information. The result of this operation is only the information that was not present in the background image. The illustration to the left represents the three images: Sample Image + Background Image, Background Image, and the resultant Sample Image. Granted, the application is more complicated than this simple example, but the principle is the same except that the comparisons are performed on pixel neighborhoods.
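The simplest form described above, subtracting a stored background image pixel by pixel, might look like the following. This is a minimal sketch using nested lists as images; the function name and the small 3 x 3 test images are hypothetical, and negative differences are clamped to zero as one possible convention.

```python
def subtract_background(sample, background):
    """Return sample minus background, pixel by pixel, clamped at zero."""
    if len(sample) != len(background) or any(
        len(s_row) != len(b_row) for s_row, b_row in zip(sample, background)
    ):
        raise ValueError("sample and background must have the same dimensions")
    return [
        [max(s - b, 0) for s, b in zip(s_row, b_row)]
        for s_row, b_row in zip(sample, background)
    ]


# Hypothetical images: the sample is the background plus one bright feature.
background = [[10, 12, 11], [11, 10, 12], [12, 11, 10]]
sample = [[10, 12, 11], [11, 60, 12], [12, 11, 10]]

for row in subtract_background(sample, background):
    print(row)
```

Only the center pixel differs between the two images, so it is the only nonzero value left after subtraction.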

This very simplified example of the function will only work for basic images in which low contrast information is not important. A more sophisticated approach is to create the "Background Image" from the actual sample image using appropriate image processing functions. This is part of the unique image processing functionality of the Phoenix Imaging AVSIS™ surface inspection algorithms. We compare each pixel in the sample image to the surrounding pixels so that defective regions are isolated based on relative contrast to the surrounding areas. This allows the system to isolate defective regions even if the lighting conditions change across the component under inspection.
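The general idea of comparing each pixel to its surroundings can be sketched with a plain local-mean comparison. This is not the AVSIS™ algorithm, whose details are not given here; it is a generic illustration, and the function names, neighborhood radius, contrast threshold and test image are all assumptions.

```python
def local_contrast(image, radius=1):
    """For each pixel, subtract the mean of its neighbors (the pixel itself excluded)."""
    rows, cols = len(image), len(image[0])
    result = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            total, count = 0, 0
            for rr in range(max(0, r - radius), min(rows, r + radius + 1)):
                for cc in range(max(0, c - radius), min(cols, c + radius + 1)):
                    if (rr, cc) != (r, c):
                        total += image[rr][cc]
                        count += 1
            # A 1 x 1 image has no neighbors, so its contrast is left at zero.
            if count:
                result[r][c] = image[r][c] - total / count
    return result


def find_defects(image, min_contrast, radius=1):
    """Return (row, col) positions whose local contrast magnitude reaches min_contrast."""
    contrast = local_contrast(image, radius)
    return [
        (r, c)
        for r, row in enumerate(contrast)
        for c, value in enumerate(row)
        if abs(value) >= min_contrast
    ]


# Hypothetical image: lighting brightens from left to right, with one defect
# 30 gray levels above its surroundings at row 2, column 2.
image = [[100, 110, 120, 130, 140] for _ in range(5)]
image[2][2] += 30

print(find_defects(image, min_contrast=20))
```

Because the comparison is local, the lighting gradient across the image does not trigger detections on its own, whereas a single fixed threshold low enough to catch the defect would also flag the brighter right-hand columns.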

The isolation of small imperfections that have very low contrast must be performed using high bit depth sensors. Sometimes the imperfections are only 20 or 30 gray levels above the background on a 4096-level (12-bit) grayscale image. If one is using an 8-bit sensor or image processing system, the defect may not even be visible. More bit depth results in larger images because each pixel is represented by a 16-bit word. 16-bit processing usually requires more time than processing the smaller 8-bit images, so the amount of memory and the processor speed become critical.
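To see why such a defect can vanish at 8 bits, consider reducing 12-bit values to 8 bits by discarding the four low-order bits. This is one common way to reduce bit depth, assumed here for illustration; the gray level values are hypothetical.

```python
def to_8bit(value_12bit: int) -> int:
    """Reduce a 12-bit gray level (0-4095) to 8 bits by dropping the 4 low-order bits."""
    if not 0 <= value_12bit <= 4095:
        raise ValueError("value must be in the 12-bit range 0-4095")
    return value_12bit >> 4


# Hypothetical background level and a defect 25 gray levels brighter (12-bit scale).
background_level = 2000
defect_level = 2025

print("12-bit contrast:", defect_level - background_level)
print("8-bit contrast: ", to_8bit(defect_level) - to_8bit(background_level))
```

A difference of 25 levels at 12 bits collapses to about one level at 8 bits, which is well inside the 5 to 10 gray levels of noise discussed earlier.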
